Owli-AI Research

An Electronic Guide Dog for the Blind based on Artificial Neural Networks

(2021) - Paper

S. Lopatin; F. v. Zabiensky; M. Kreutzer; K. Rinn; D. Bienhaus

Technische Hochschule Mittelhessen, University of Applied Sciences, Institute of Technology and Computer Science, Giessen, Germany

Notice: this page was machine-translated and is pending editorial review.

Abstract

This paper presents a feasibility study for an electronic assistance system intended to support blind and visually impaired people with orientation and navigation in public spaces. The core approach is optical detection of walkable sidewalk areas using semantic segmentation with a neural network trained from scratch. In the practical implementation, an NVIDIA Jetson Nano serves as the mobile computing unit for on-device inference. Navigation cues are derived from the detected sidewalk structures and delivered to the user as speech. The work thus examines the technical feasibility of a portable electronic guide dog based on computer vision and convolutional neural networks (CNNs).
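The step from a segmentation result to a spoken cue can be illustrated with a minimal sketch. This is not the method used in the paper: the function name `navigation_cue`, the `tolerance` threshold, the choice of the lower half of the frame as region of interest, and the cue wording are all assumptions made for illustration. The input is assumed to be a binary mask (1 = walkable sidewalk) produced by some segmentation network.

```python
import numpy as np


def navigation_cue(mask: np.ndarray, tolerance: float = 0.1) -> str:
    """Map a binary sidewalk mask (1 = walkable) to a short text cue.

    Hypothetical helper for illustration only; the thresholds and the
    cue strings are assumptions, not values from the paper.
    """
    height, width = mask.shape
    # Consider only the lower half of the frame, i.e. the ground nearest the user.
    near = mask[height // 2:, :]
    cols = np.nonzero(near)[1]  # column indices of walkable pixels
    if cols.size == 0:
        return "stop, no sidewalk detected"
    # Horizontal offset of the sidewalk centre from the image centre,
    # normalised to the range [-0.5, 0.5] using pixel-centre coordinates.
    offset = (cols.mean() + 0.5) / width - 0.5
    if offset > tolerance:
        return "keep right"
    if offset < -tolerance:
        return "keep left"
    return "straight ahead"


if __name__ == "__main__":
    demo = np.zeros((8, 8), dtype=np.uint8)
    demo[:, 6:] = 1  # sidewalk visible only at the right edge of the frame
    print(navigation_cue(demo))
```

In a real system the returned string would be passed to a text-to-speech component, and the cue would likely be smoothed over several frames to avoid jittery instructions.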

Keywords

- electronic travel aid

- blind sidewalk detection

- portable ETA system

- electronic travel aid technology

- computer vision

- convolutional neural network

Figures

7 visuals from the paper.

-

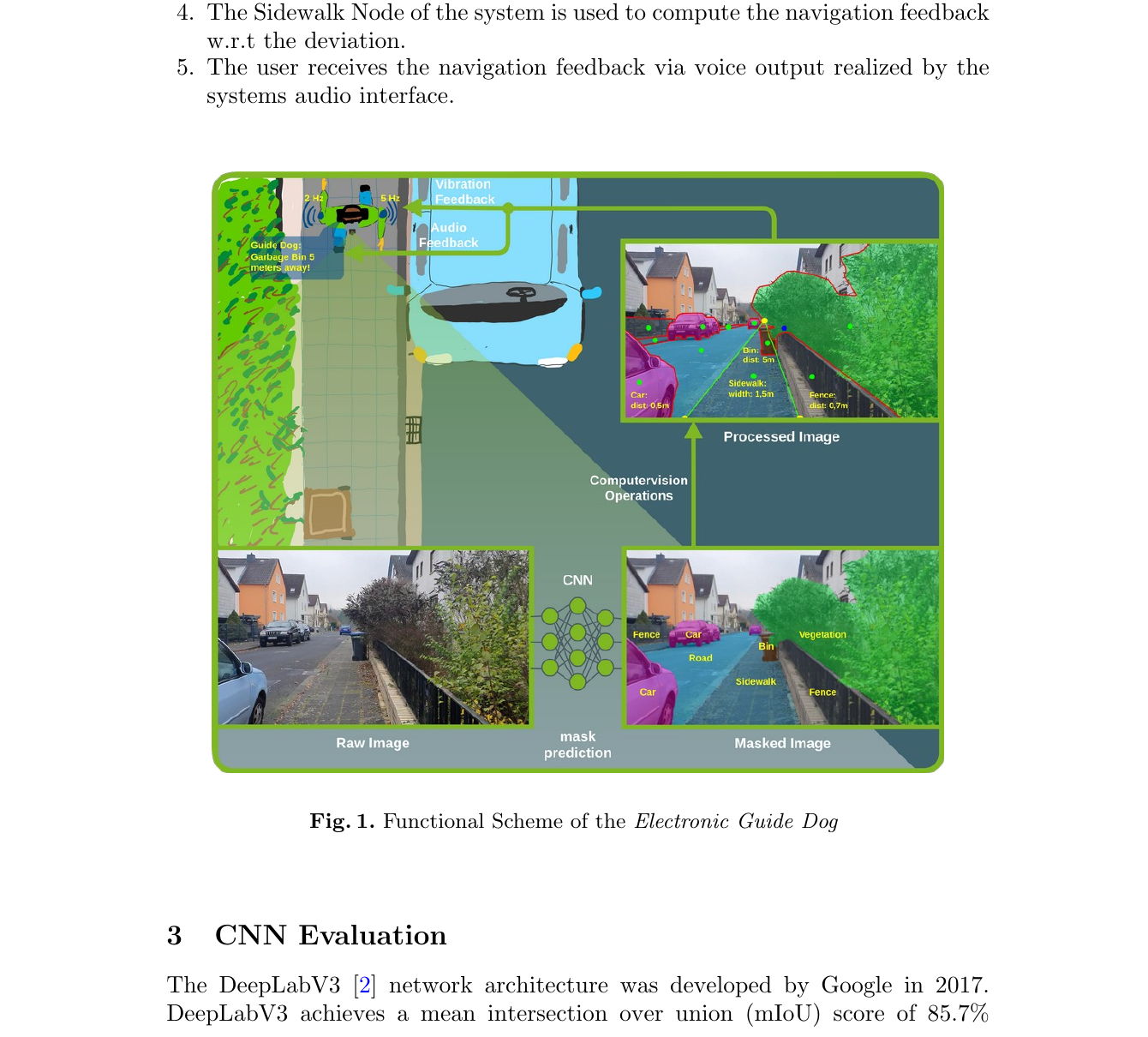

Fig. 1 Functional scheme of the Electronic Guide Dog. -

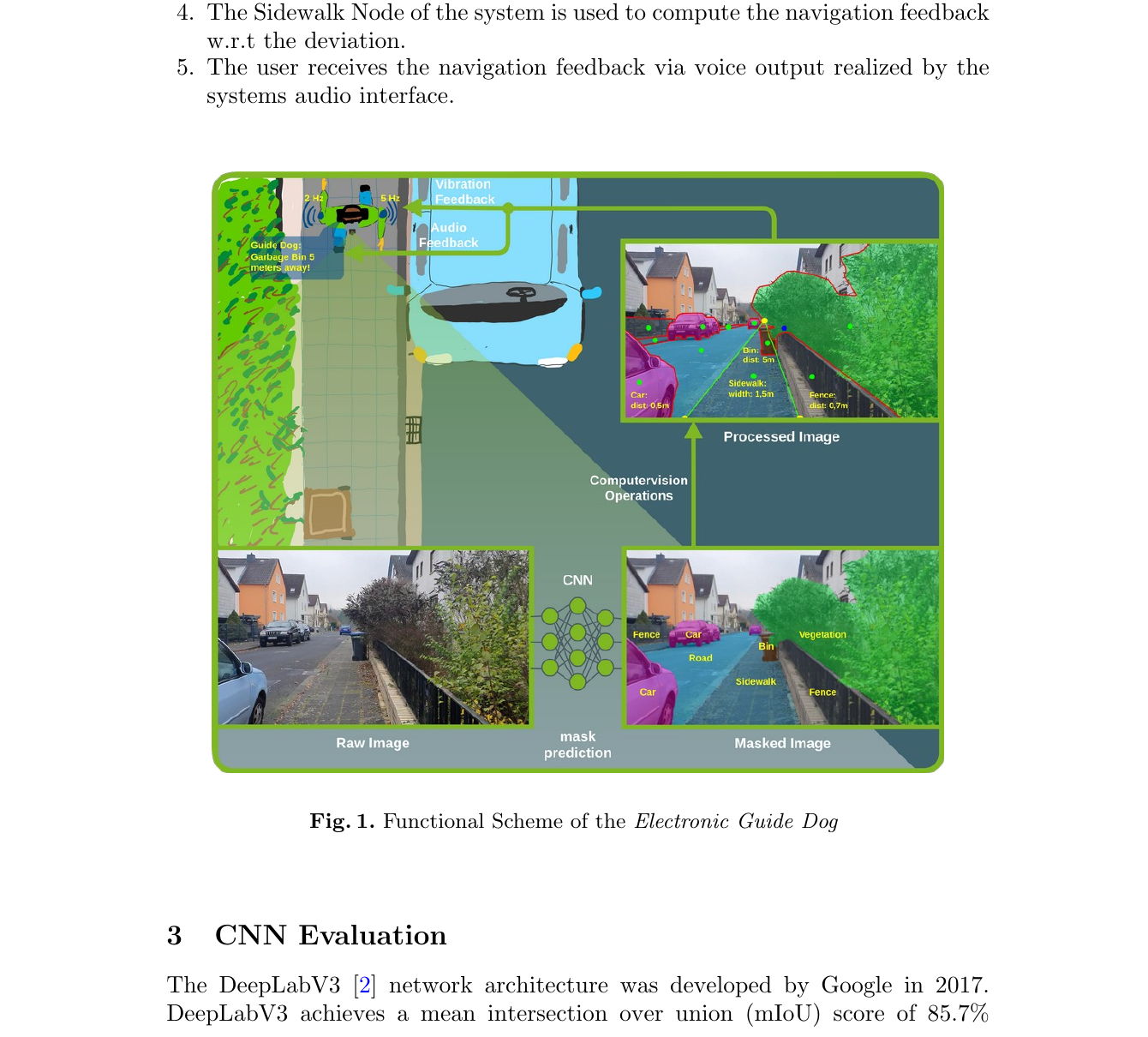

Fig. 2 System architecture with data-flow based processing. -

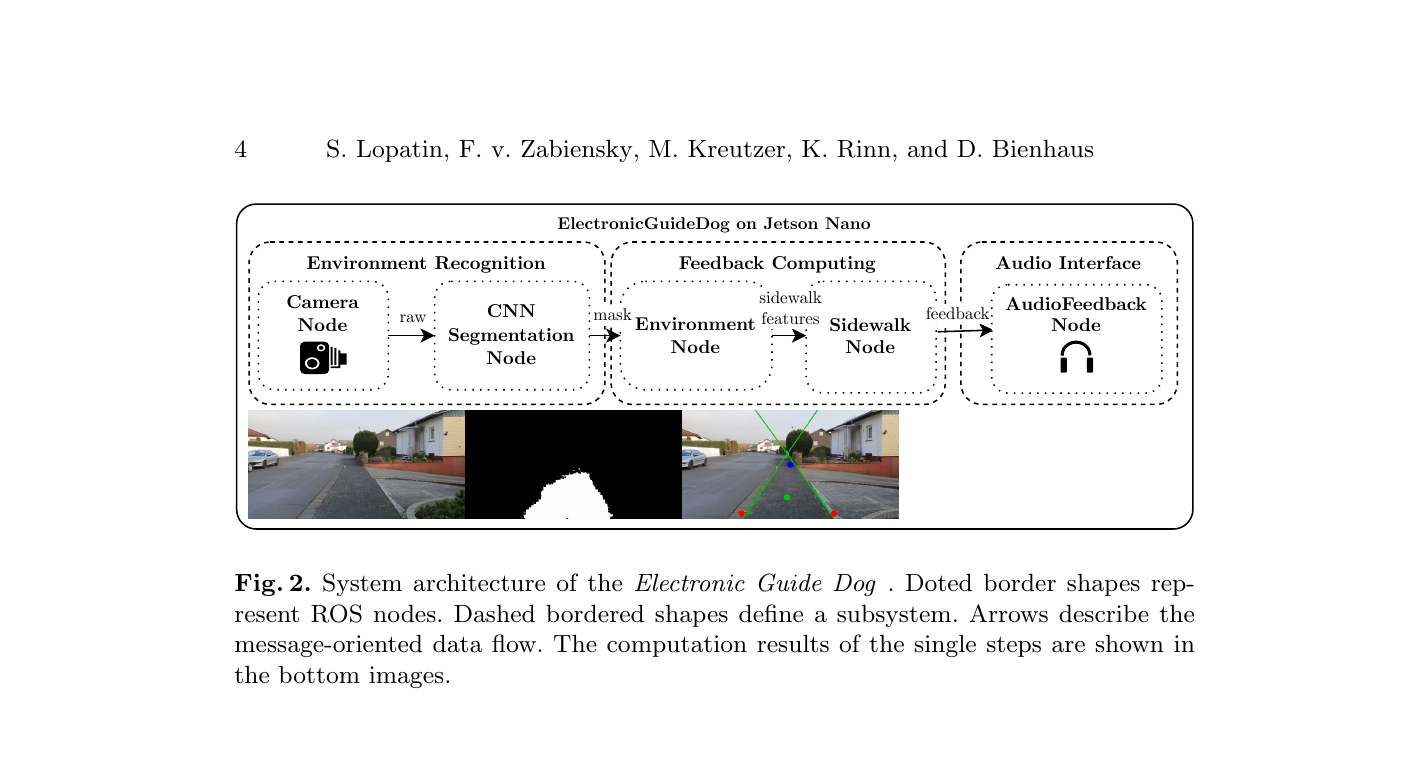

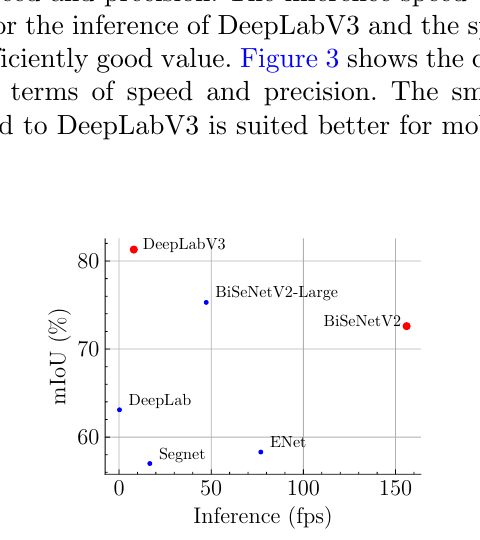

Fig. 3 Comparison of speed and precision. -

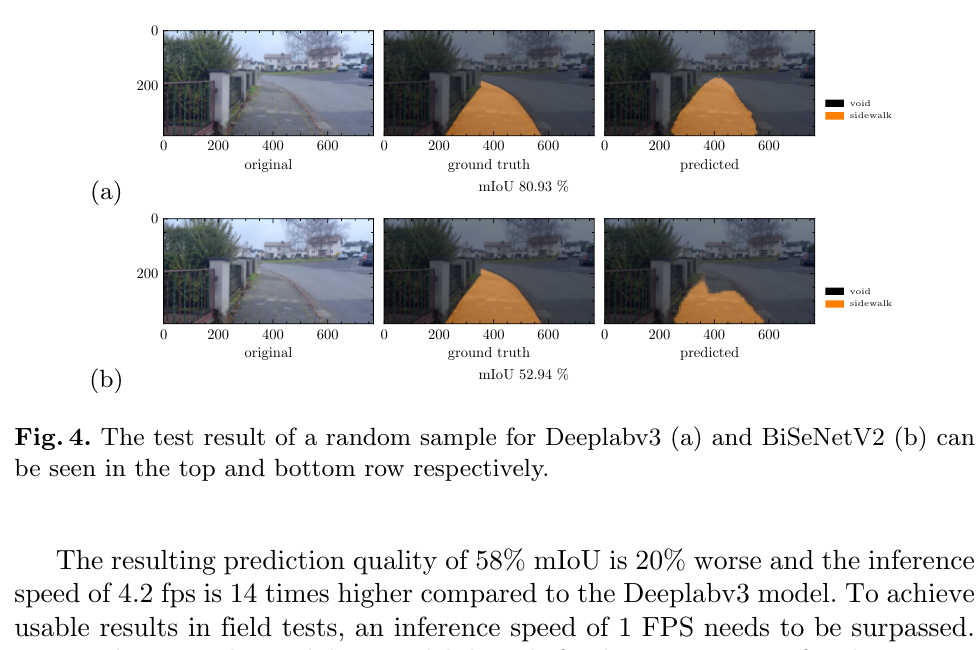

Fig. 4 Comparison result of one test sample for DeepLabV3 and BiSeNetV2. -

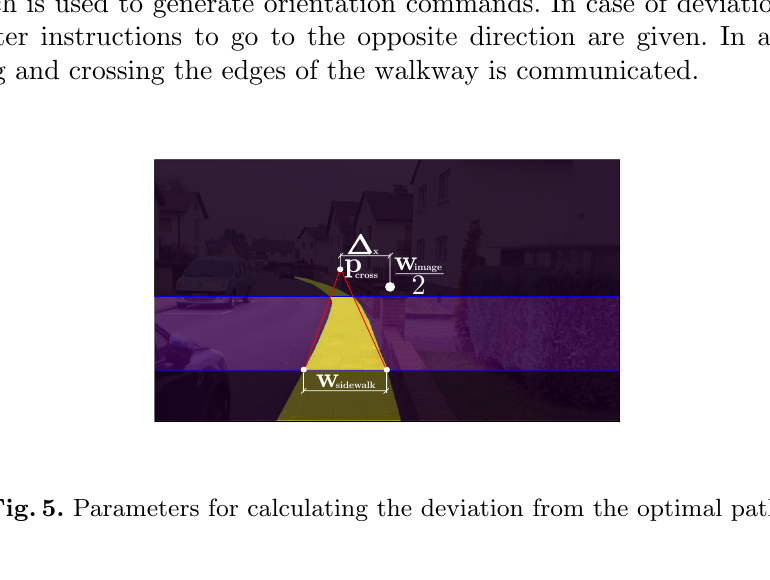

Fig. 5 Parameters for deviation calculation from optimal path. -

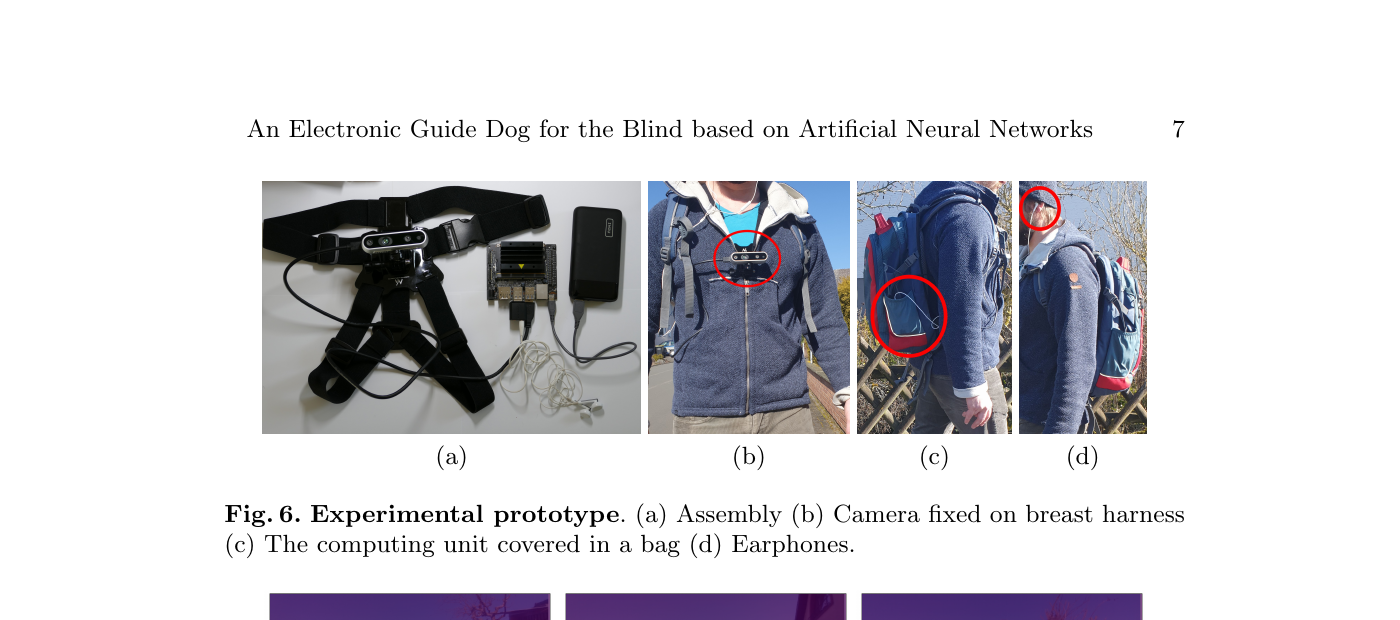

Fig. 6 Experimental prototype in field-test setup. -

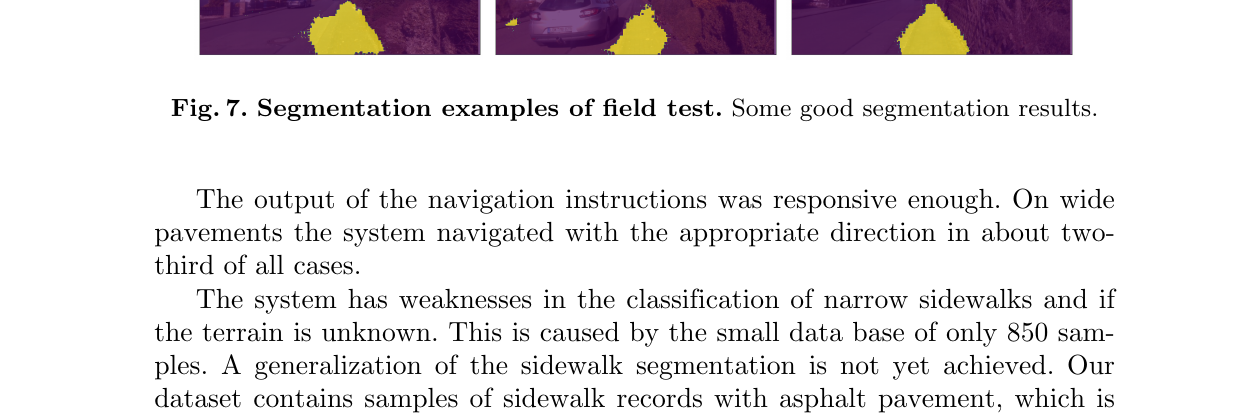

Fig. 7 Segmentation examples from field test.