Owli-AI Research Project

AHRUS: Audible High-Resolution Ultrasonic Sonar

AHRUS is an electronic guide-dog concept that supports echolocation through audible ultrasound. The system aims to make obstacles and structures perceivable earlier and, because it needs no headphones, to leave natural spatial hearing intact.

Notice: this page was machine-translated and is pending editorial review.

- echolocation

- ultrasound

- assistive technology

- spatial hearing

- obstacle detection

Project description

Goal

The AHRUS project examines how ultrasound can serve as an additional orientation channel for blind and visually impaired people. The goal is practical support in daily life, especially with small structures and surfaces that are otherwise hard to perceive.

How it works (in brief)

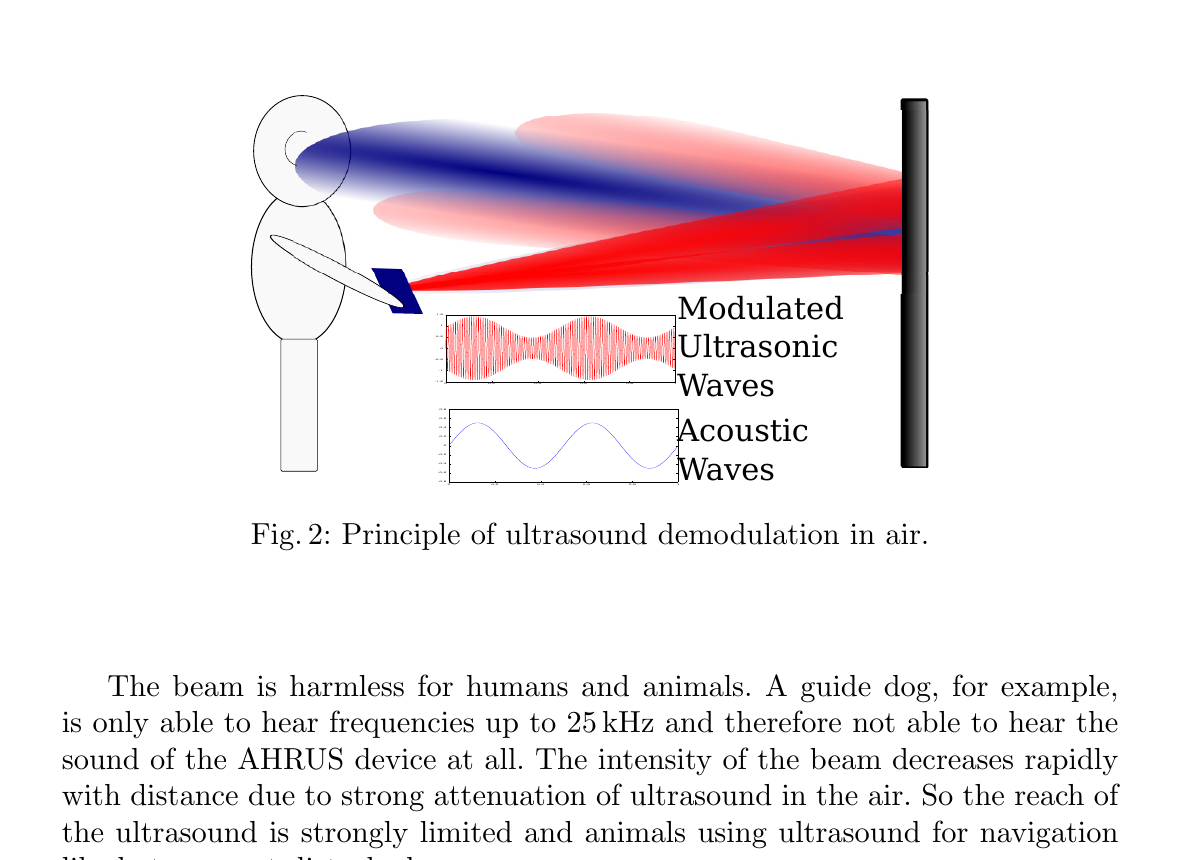

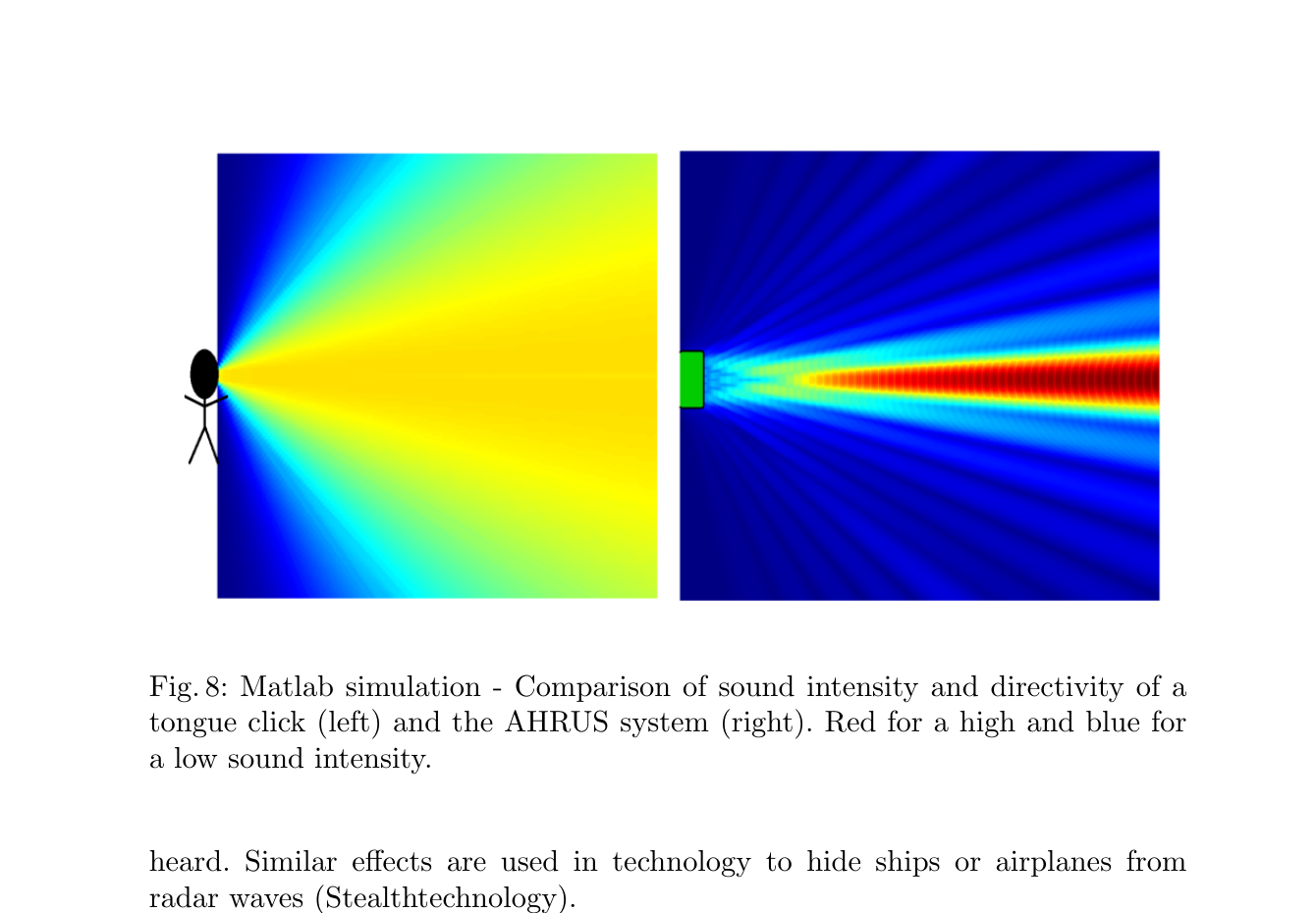

A focused, modulated ultrasound beam is emitted, and nonlinear acoustic effects in air convert part of it into audible sound. Reflections from objects can then be heard with the user’s own ears and localized in space.
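The conversion from modulated ultrasound to audible sound can be illustrated with a small numerical sketch. It assumes a simple amplitude-modulated carrier and uses Berktay's far-field approximation, a standard textbook model in which the audible pressure is proportional to the second time derivative of the squared envelope. Neither the modulation scheme nor the 1 kHz tone, the sample rate, or the function names come from the AHRUS project; they are illustrative choices only.

```python
import math

def envelope(t, mod_freq_hz, mod_index):
    """Amplitude envelope E(t) of an AM ultrasound carrier (assumed scheme)."""
    return 1.0 + mod_index * math.sin(2.0 * math.pi * mod_freq_hz * t)

def demodulated(t, mod_freq_hz, mod_index, dt=1e-6):
    """Berktay-style audible signal, up to a constant factor: d^2/dt^2 of E(t)^2.

    The derivative is taken numerically with a central second difference.
    """
    def e2(x):
        return envelope(x, mod_freq_hz, mod_index) ** 2
    return (e2(t + dt) - 2.0 * e2(t) + e2(t - dt)) / dt ** 2

def tone_amplitude(samples, sample_rate_hz, freq_hz):
    """Amplitude of one sinusoidal component, via a single-bin DFT."""
    w = 2.0 * math.pi * freq_hz / sample_rate_hz
    re = sum(s * math.cos(w * k) for k, s in enumerate(samples))
    im = sum(s * math.sin(w * k) for k, s in enumerate(samples))
    return 2.0 * math.hypot(re, im) / len(samples)

def harmonic_ratio(mod_index, mod_freq_hz=1000.0, sample_rate_hz=48000.0, n=480):
    """Second-harmonic amplitude relative to the wanted audible tone."""
    # n samples cover a whole number of modulation periods (10 with the defaults).
    samples = [demodulated(k / sample_rate_hz, mod_freq_hz, mod_index)
               for k in range(n)]
    fundamental = tone_amplitude(samples, sample_rate_hz, mod_freq_hz)
    second = tone_amplitude(samples, sample_rate_hz, 2.0 * mod_freq_hz)
    return second / fundamental

if __name__ == "__main__":
    for m in (0.2, 0.5, 0.8):
        print(f"modulation index {m}: distortion ratio ~ {harmonic_ratio(m):.3f}")
```

Under this model, the envelope tone reappears in the audible band together with a second harmonic whose relative strength is close to the modulation index. The sketch therefore shows the principle, and also why plain amplitude modulation produces some distortion.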

What is new compared to classic echolocation?

Classic active echolocation with tongue clicks relies on longer wavelengths and therefore resolves fine structures less well. AHRUS uses short ultrasound wavelengths and a clearly directed beam, which in specific scenarios can make structure boundaries and small obstacles easier to tell apart.
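The difference in wavelength can be made concrete with the relation wavelength = speed of sound / frequency. The sketch below is illustrative only: the 3 kHz click frequency and the 40 kHz ultrasound frequency are assumed example values, not figures from the AHRUS project, and the speed of sound is taken as 343 m/s (air at about 20 °C).

```python
SPEED_OF_SOUND_M_S = 343.0  # air at about 20 degrees Celsius

# Illustrative frequencies only; neither value is taken from the AHRUS project.
ASSUMED_CLICK_HZ = 3_000.0
ASSUMED_ULTRASOUND_HZ = 40_000.0

def wavelength_m(freq_hz, speed_m_s=SPEED_OF_SOUND_M_S):
    """Wavelength in metres for a sound wave of the given frequency."""
    if freq_hz <= 0:
        raise ValueError("frequency must be positive")
    return speed_m_s / freq_hz

if __name__ == "__main__":
    click = wavelength_m(ASSUMED_CLICK_HZ)
    ultrasound = wavelength_m(ASSUMED_ULTRASOUND_HZ)
    print(f"assumed click:      {click * 100:.1f} cm")
    print(f"assumed ultrasound: {ultrasound * 1000:.1f} mm")
    print(f"ratio:              {click / ultrasound:.1f}x")
```

With these assumed values, the click wavelength is on the order of ten centimetres and the ultrasound wavelength is under a centimetre, which is the scale difference behind the finer structural selectivity described above.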

Current status (prototype and first tests)

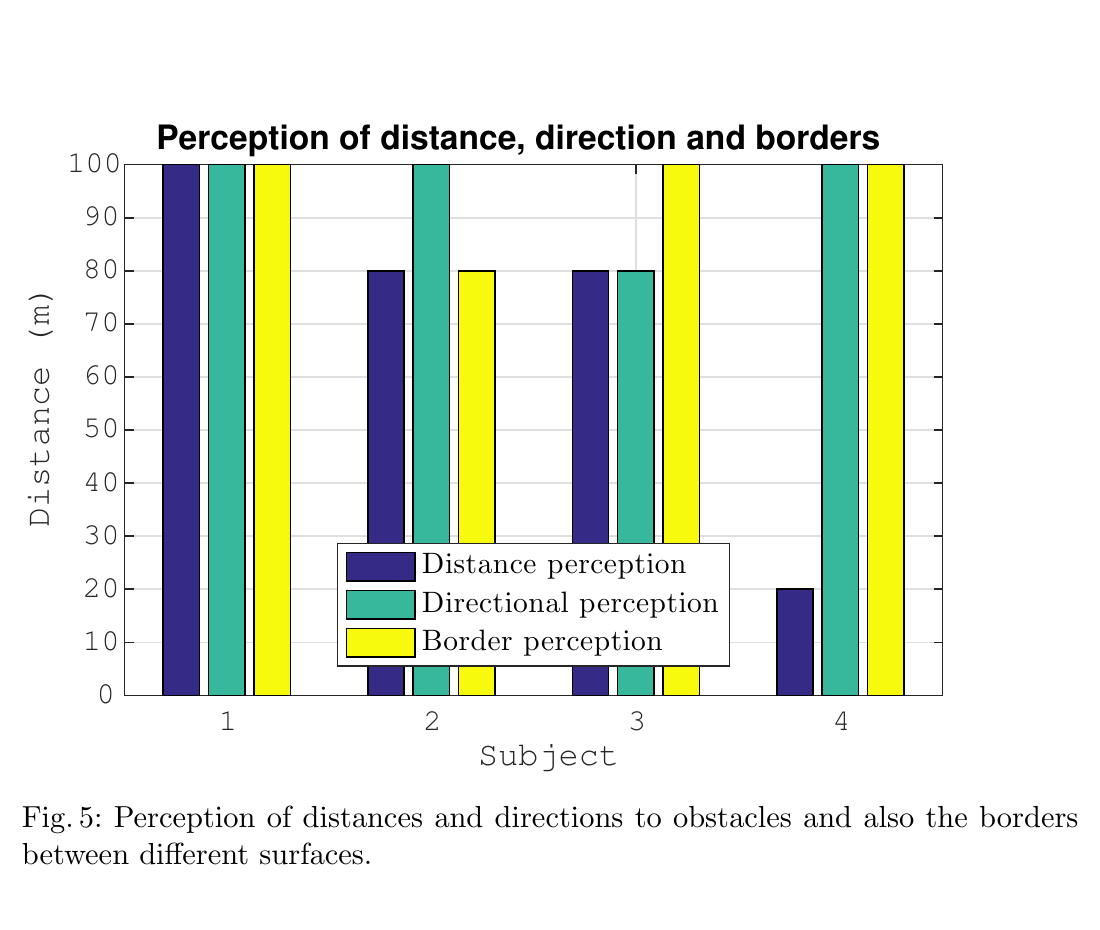

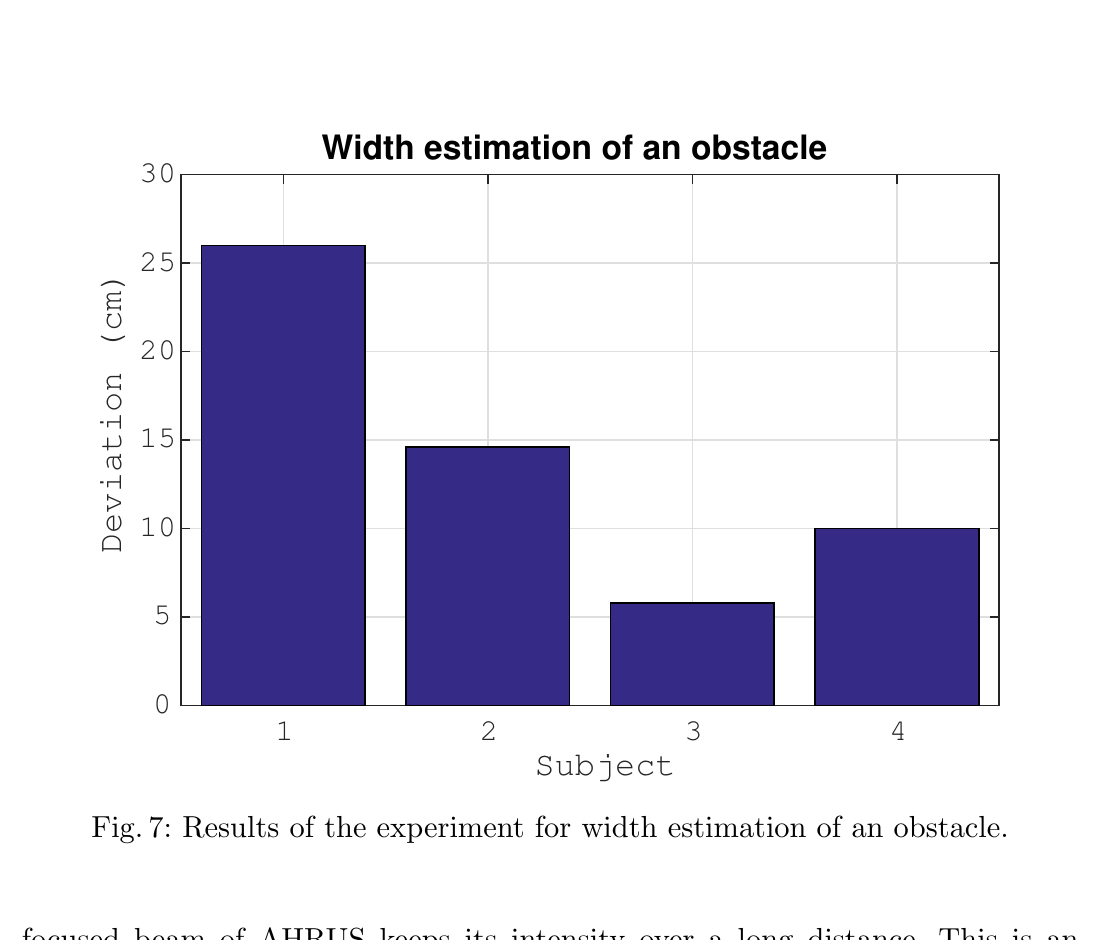

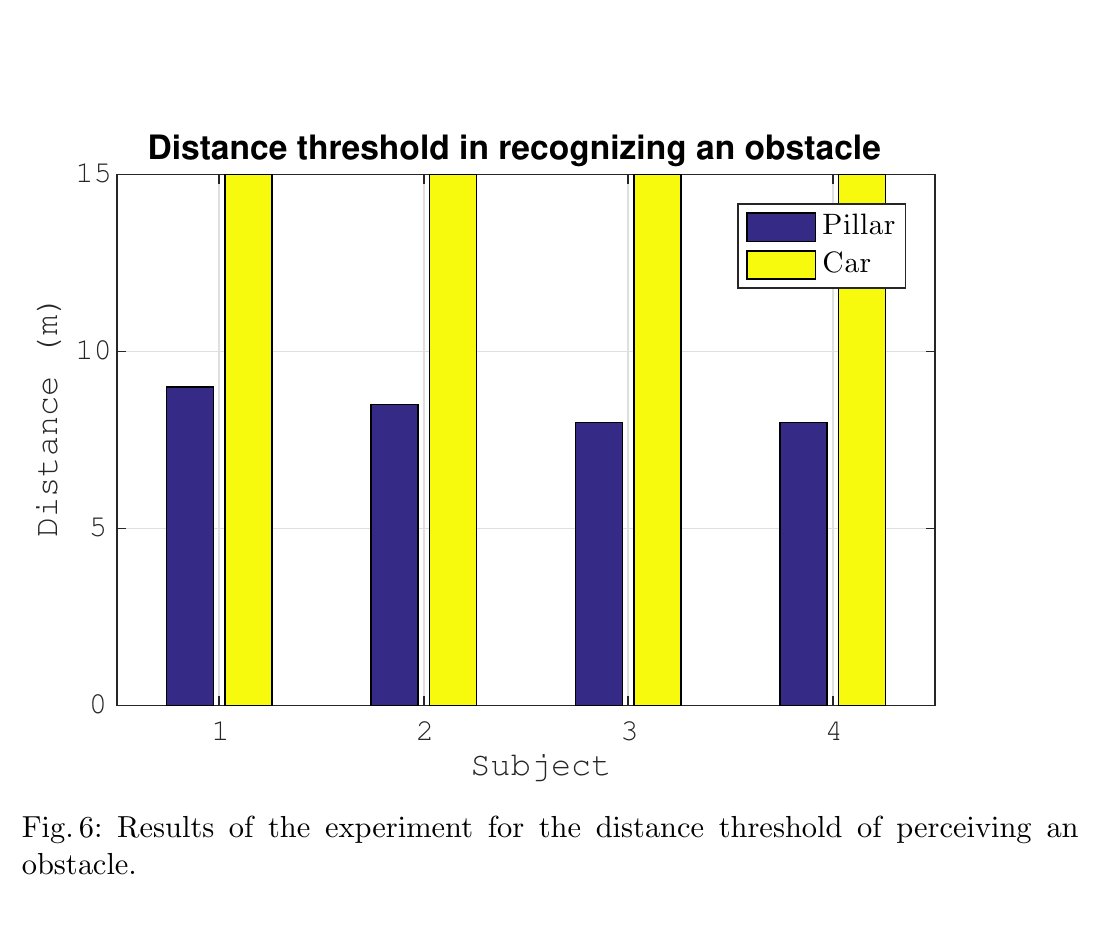

A functional prototype exists. An initial evaluation with four participants assessed distance and direction perception, obstacle-width estimation, and boundary perception, and compared the results with classic flash sonar.

Outputs

- Publication: Ultrasonic Waves to Support Human Echolocation

Figures

13 visuals from the AHRUS paper.

-

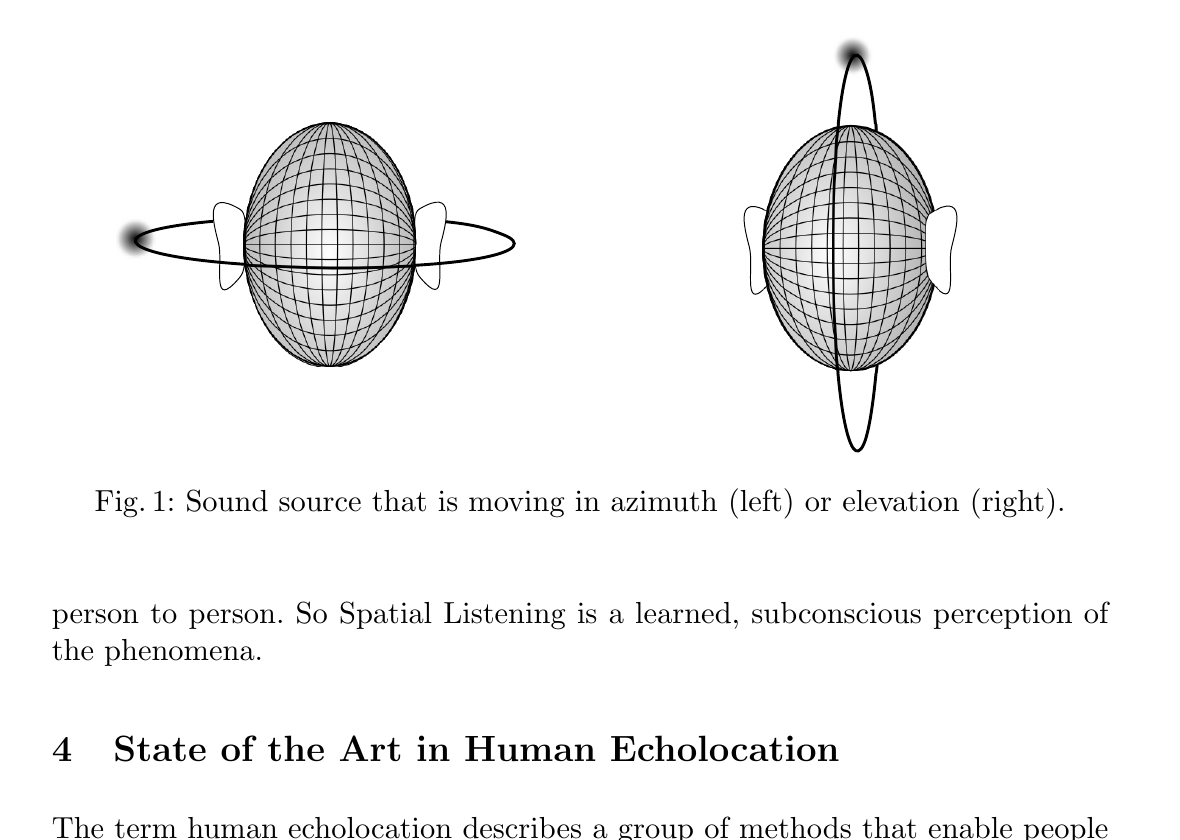

Fig. 1 Overall directional representation. -

Fig. 1 Detail left (azimuth). -

Fig. 1 Detail right (elevation). -

Fig. 2 Principle of ultrasound demodulation. -

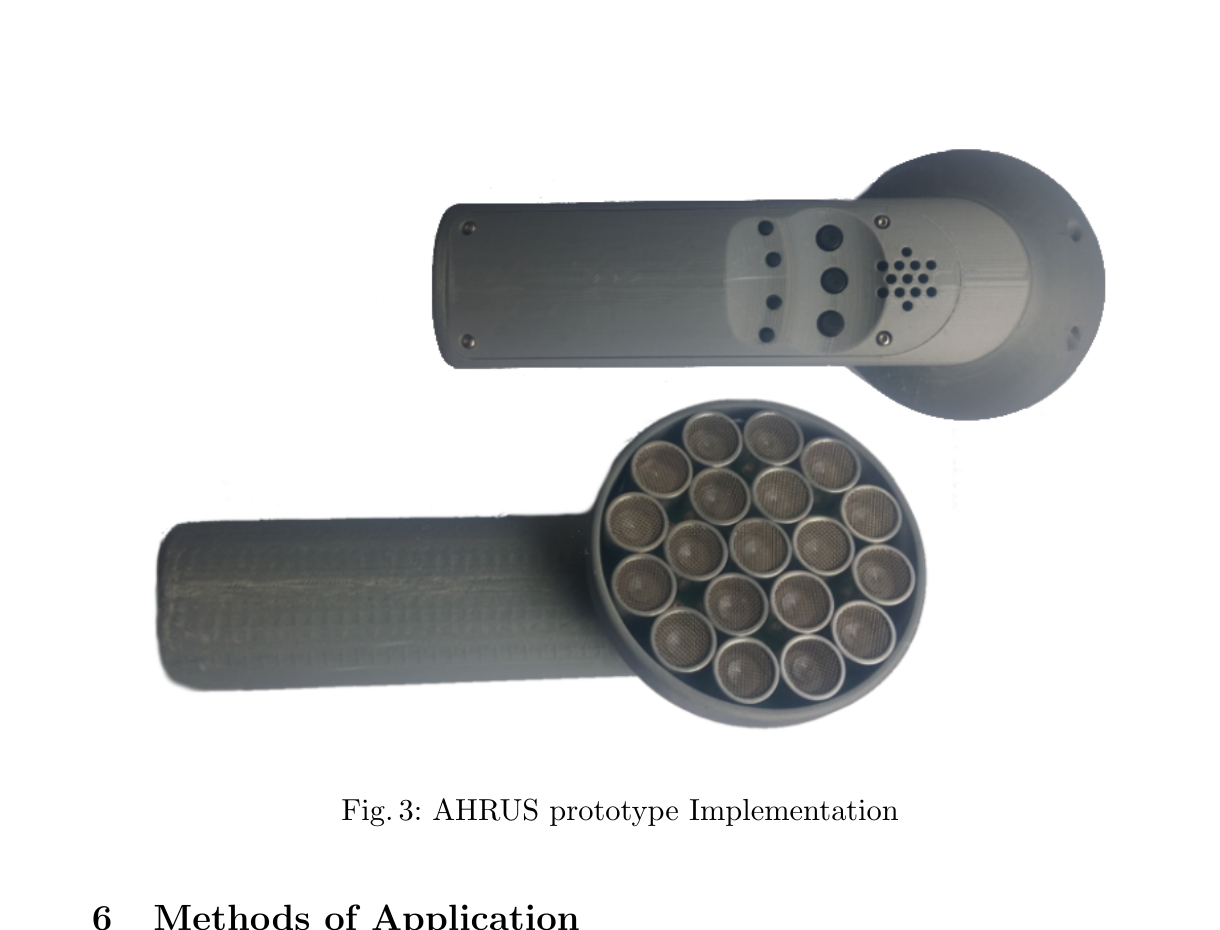

Fig. 3 Prototype implementation. -

Fig. 3 Transducer array detail. -

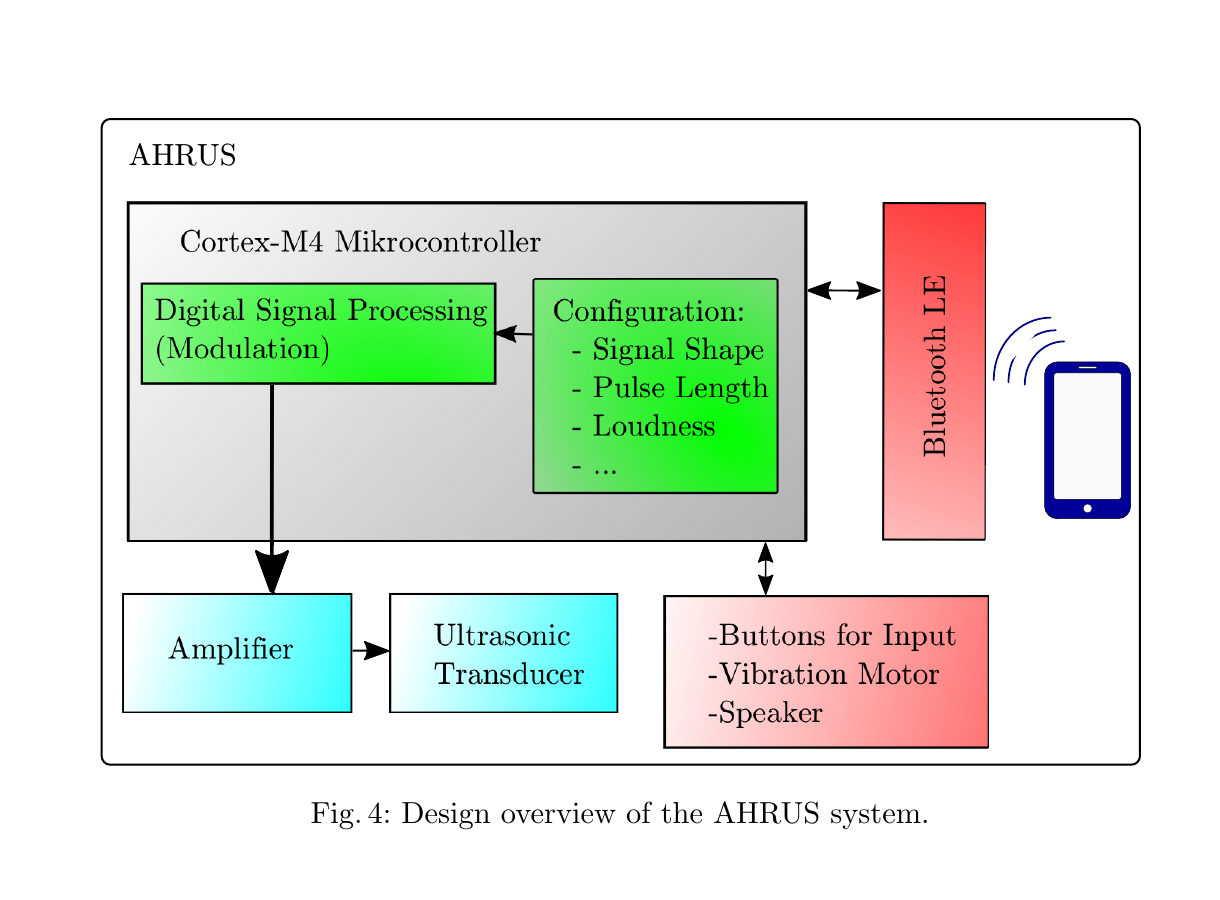

Fig. 4 AHRUS system design overview. -

Fig. 5 Results for distance, direction, and boundary perception. -

Fig. 6 Distance threshold in obstacle detection. -

Fig. 7 Obstacle width estimation. -

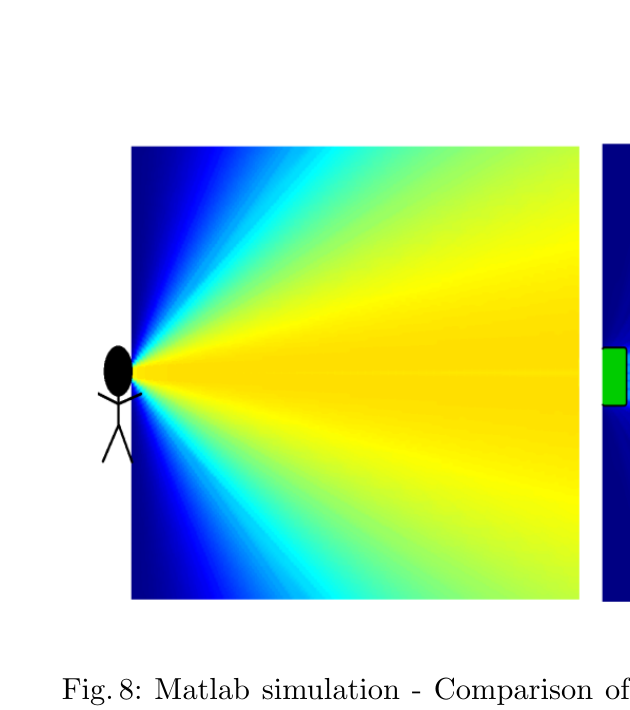

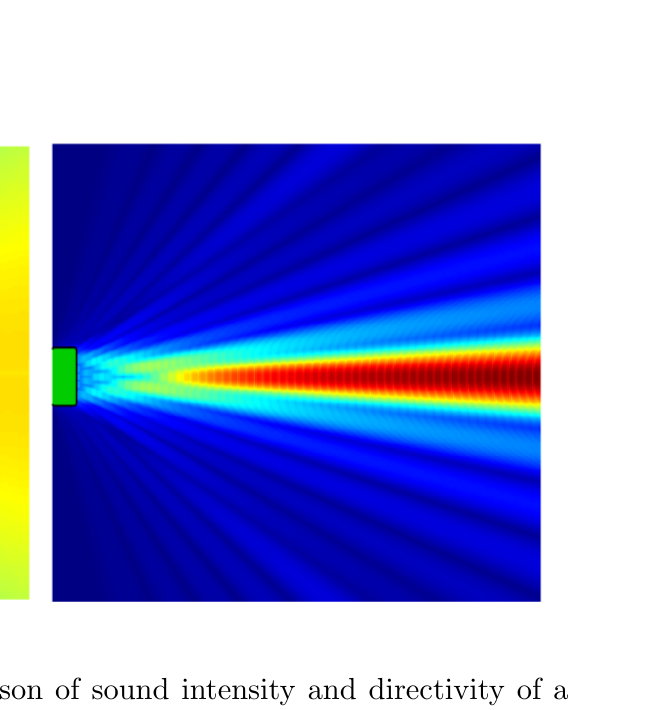

Fig. 8 Overall comparison of directivity. -

Fig. 8 Detail left (flash sonar). -

Fig. 8 Detail right (AHRUS).